The TikTok Files

How social media companies experiment on human beings

Update: Trump signed an executive order postponing the TikTok ban for 75 days, stating that his administration would “pursue a resolution that protects national security while saving a platform used by 170 million Americans.”

On January 17, the Supreme Court upheld a federal law that bans TikTok from the United States unless its China-based owner sells it. The ban, long overdue, is the fruit of much controversy over data and privacy concerns.

But there is a far more fundamental reason that we should feel, if not happy, then relieved about TikTok’s ban. As Jonathan Haidt noted in a long essay for his Substack, the company represents everything wrong with social media. Microblogging sites (Twitter) and photo-sharing apps (Instagram, Snapchat) are slow to enforce age restrictions, accountability features, or anything that will hurt their profitability, even though, privately, they admit their products are harmful for developing minds. As these companies incorporate addictive features like infinite scroll, reels, and “like” counters into their products, they hide what such rollouts entail: more phone-addicted teenagers who suffer from social comparison, depression, anxiety, and body dysmorphia. TikTok elevated this deception to an art form.

This essay exists to shed light on that deception, how it should frame our reaction to the site’s banishment, and, most importantly, why TikTok’s abuses should inform our view of social media writ large. The fiasco at TikTok is not unique, but merely a more extreme version of what goes on at Instagram, Facebook, and Twitter. And just as the harms posed by TikTok are integral to its very nature, so too the harms of social media cannot be divorced from the nature of the medium itself.

TikTok’s victims

With 170 million American users, China-based TikTok owns a significant portion of the American attention span. That ownership is concentrated among teenage users (13-17 years old), 16% of whom say they use TikTok “almost constantly”, according to polling by the Pew Research Center. Yet for all the time they spend on the app, American teens sure hate TikTok: when asked on surveys, 47% of young users say they wish it “had never been invented.”

A series of lawsuits filed by state attorneys general revealed a trove of internal documents and text messages exposing the inner thoughts and ruminations of TikTok employees. Although they have nothing to do with the privacy concerns at issue in the ban, the briefs submitted by the attorneys general of Nevada, New York, Kentucky, and Utah have caused many to doubt whether social media, as such, deserves a place in American life.1

According to internal documents, TikTok developers knew their app was designed for compulsive use, yet didn’t seem to care, so long as they added to their DAUs (Daily Active Users). They found the American teenager to be a perfect fit for their designs. The Kentucky brief quotes one TikTok document as saying: “It’s better to have young people as an early adopter, especially the teenagers in the U.S. Why? They [sic] got a lot of time.” Another internal memo read: “Teenagers in the U.S. are a golden audience… if you look at China the teenage culture doesn’t exist–the teens are super busy in school studying for tests, so they don’t have the time and luxury to play social media apps.”2

Thus TikTok began an experiment on American kids and teens that has only now ended. It leaves behind a trail of destruction: addiction, mental illness, and loss of innocence.

Addiction

Addiction is a fact of life on TikTok, a site dominated by infinite streams of inane, unrelated 30-second videos. TikTok employees knew their app was causing problems for millions of users, yet continued to make it more addictive anyway. One employee put it this way:3

The reason kids watch TikTok is because the algo[rithm] is really good… But I think we need to be cognizant of what it might mean for other opportunities. And when I say opportunities, I literally mean sleep, and eating, and moving around the room, and looking at somebody in the eyes.

Another TikTok memo states that “the product… has baked into it compulsive use.”4 Time and again, TikTok employees admitted that the sole purpose of their app was to get users to use it for as long as possible, side effects be damned. Young users are ideal for such retention because their prefrontal cortex is still developing and minors, by TikTok’s own admission, “do not have executive function to control their screen time.”5 Yet TikTok employees see phone addiction as a sign of success. From the Nevada brief:6

In a “TikTok Strategy” presentation, Defendants celebrated the fact that users spend inordinate amounts of time on the platform. “TikTok is in most people’s lives like this,” Defendants explained, referring to online posts that read, “go on tiktok for 5 mins and 3 hours have passed” and “my night routine: watch 3 hours of tiktok videos, try to follow the dance steps, realise u suck at dancing n cry about it, continue watching tiktok videos, sleep.”

TikTok also leverages the fact that puberty is a time of identity-formation, often involving peers. Humans are mimetic; we learn by watching what others are doing. By making TikTok an indelible part of teen identity-formation, developers ensure that users are torn between staying culturally relevant and remaining offline. Alexandra Evans—a behavioral specialist turned TikTok exec—articulated a design strategy used by every social media company: “Persuasive design strategies exploit the natural human desire to be social and popular, by taking advantage of an individual’s fear of not being social and popular in order to extend their online use.”7

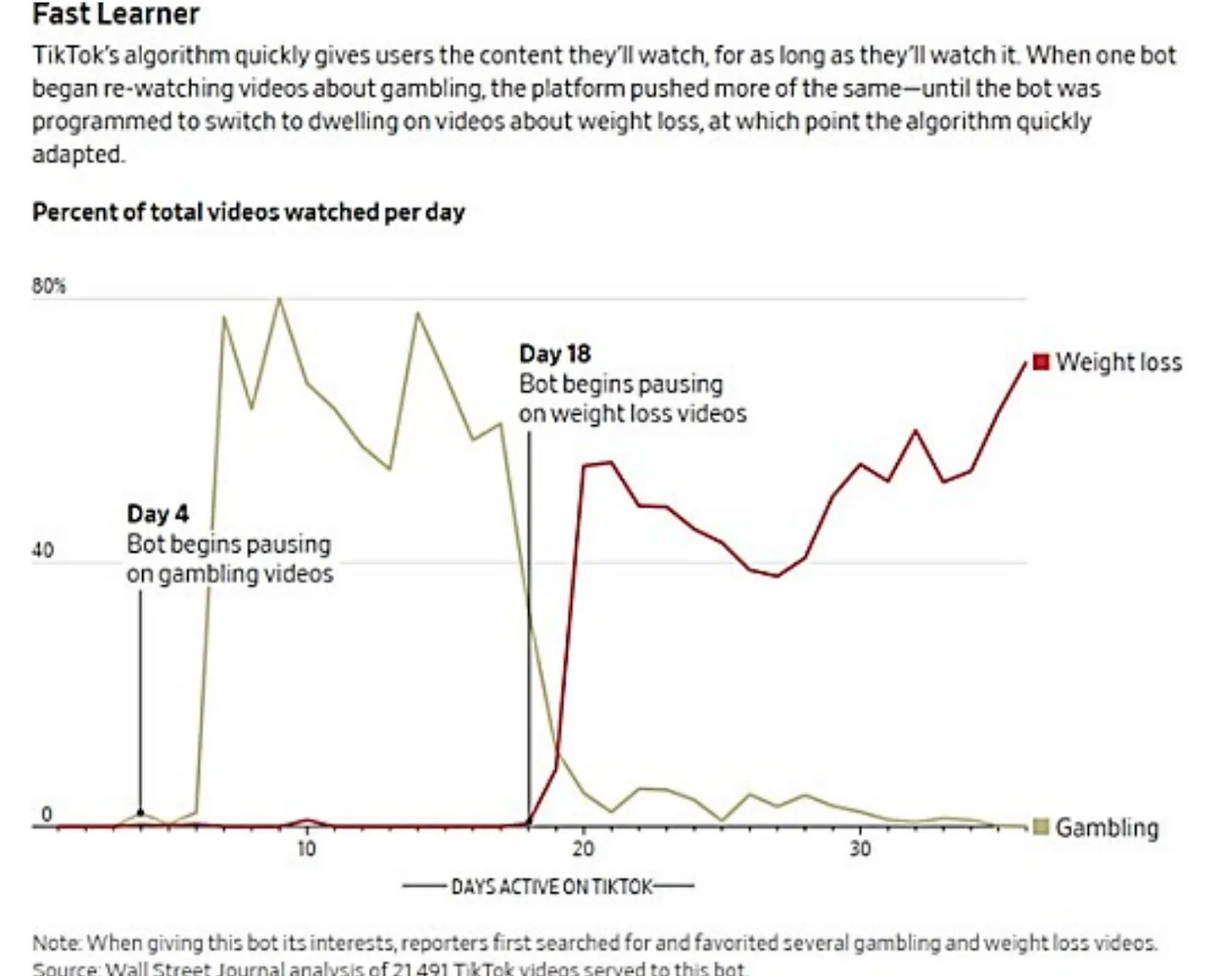

Driven by the need to stay culturally relevant, users find themselves, in the words of another TikTok memo, “trapped in a rabbit hole of what our algorithm thinks they like.”8 Such rabbit holes are the stuff of addiction, resulting in a poorer quality of life. In the words of the Nevada brief:9

Another internal report based on in-depth interviews with TikTok users found that overuse of TikTok caused “negative emotions,” “interfered with [users’] obligations and productivity,” and led to “negative impacts . . . on their lives,” including “lost sleep, missed deadlines, poor school performance, running late, etc.”

This is why when users are asked the dollar amount they would have to be paid to quit TikTok, the average response is $50; but when asked what they would have to be paid if all their peers were persuaded to quit the app, the average answer is less than zero. In other words, provided their peers were to quit TikTok, most users would pay money to never use TikTok again.10

Indeed, TikTok is designed to coerce as many people as possible into using it for as long as possible, and it reinforces its influence through its cultural relevance, meaning that even those who want to break free from its clutches find themselves unable to do so for fear of missing out.

Mental illness

It takes concerted effort to use TikTok without becoming a compulsive user, and it’s even more difficult to be a compulsive user without experiencing a low-grade fever of anxiety, depression, or brain fog (or some combination of the three). What else can be expected from scrolling through a meaningless welter of videos for hours every day?11

Internally, TikTok employees take such ill effects for granted. They themselves recognize that compulsive usage “correlates with a slew of negative mental health effects like loss of analytical skills, memory formation, contextual thinking, conversational depth, empathy, and increased anxiety.”12

On TikTok, accounts like “painhub” and “sadnotes” proliferate, where users can engage in depressive thought-sharing and float their suicidal ideations without fear of judgement. One post cited by Kentucky investigators is telling: “If you could kill yourself without hurting anyone, would you?”13 Investigators in the Nebraska case created fake accounts posing as 13-, 15-, and 17-year-old Nebraska residents. Within minutes of scrolling the “For You” feed, before searching for anything or following anyone, the teen accounts were fed posts that romanticized suicide and encouraged girls to starve themselves. (In one study, researchers posing as 13-year-old users encountered similar posts within minutes of opening an account.) Scrolling through Wednesday’s “Trending Today” page, I was bombarded by, among other things, a painfully skinny girl preening for the camera. Besides that, everyone on TikTok seems to have a shiny, unreal, or dazed quality, as if they have lost their grip on reality, or, rather, as if performing for disembodied strangers has become their new reality.

Unsurprisingly, TikTok is also associated with supercharging the spread of sociogenic disorders such as gender dysphoria. A New York Post article reports that many teens say TikTok influencers were determinative in their decision to transition. Between 2009 and 2019, referrals for transition therapy in the U.K. increased 10-fold among boys and 44-fold among girls, a rise made all the more astonishing by the fact that, historically, boys were more likely to transition than girls. Needless to say, apps like TikTok are implicated.

Beyond gender dysphoria specifically, TikTok promotes the spread of mental illness generally by encouraging the medicalization of everyday symptoms, and providing loners and outcasts a sense of community rooted in a shared identity (even if that identity is literally being mentally ill). As Jonathan Haidt writes in The Anxious Generation, “Anyone who revealed an interest in mental health was soon inundated with videos of other teens displaying mental illness and receiving social support for doing so.”

He cites Dissociative Identity Disorder as an example:14

DID used to be rare, but since the arrival of TikTok, there has been an increase, primarily among adolescent girls. Influencers portraying multiple personalities have attracted millions of followers, contributing to a trend of self-identifying with the disorder. Asher, a TikTok personality who describes themself as one of a “system” of 29 identities, has amassed more than 1.1 million followers.

As with gender dysphoria, the spread of DID in a historically resistant population (girls) points to social media as the decisive factor. Jonathan Haidt breaks down the social forces at play on TikTok that, in conjunction with the plasticity of the adolescent mind, make the app ripe for the spread of sociogenic illnesses (that is, illnesses “generated by social forces”):15

People pick up emotions from others, and emotional contagion is especially strong among girls. Second, there is “prestige bias”[:] Don’t just copy anyone; first find out who the most prestigious people are, then copy them. But on social media, the way to gain followers and likes is to be more extreme, so those who present with more extreme symptoms are likely to rise fastest, making them the models that everyone else locks onto for social learning.

In other words, humans learn by imitating each other, and when “extreme” personalities become the quintessential role models, extreme behavior—ranging from the depressive to the manic—becomes mainstream.

Loss of innocence

The final and perhaps most disturbing harm posed by TikTok is the destruction of innocence. Once again, TikTok developers are to blame more than anyone else. Even when faced with mounting evidence of harm, internal memos display a frightening disregard for the safety of TikTok’s (underage) users.

Users are regularly sent pornographic content, with Jonathan Haidt citing pages 35-36 and 43-50 of the Nebraska brief as evidence. Many of the examples are too graphic to repost here, so I will include the Nebraska brief’s description of how virtual prostitutes use TikTok for self-promotion:16

One article summarizes the strategy: Porn stars are advised to “create engaging videos that tease your content and provide ‘sneak peeks’” and then “share your Instagram link in your bio and from there to your Onlyfans account.” The “ultimate goal” is to “funnel TikTok viewers to OnlyFans, using platforms like Instagram as intermediaries.” With this strategy, they can “gain a significant following and drive traffic to [their] OnlyFans.”

Another example of TikTok’s porn-to-post pipeline is the app’s LIVE feature, which allowed creators as young as 16 to stream live video of themselves to an audience of strangers. TikTok employees knew that it was “irresponsible” to expect LIVE creators to use the feature responsibly (remember, minors lack the “executive function” needed to self-regulate). But even as creators were flouting TikTok’s rules against sexually explicit content and adults were making sexual advances through lewd comments and virtual “gifts”, TikTok waited six months before raising the minimum age at which TikTokers can use LIVE from 16 to 18. The prevailing sentiment was that “LIVE was too profitable to be interfered with, even to protect children”, including hundreds of thousands of 13-to-15-year-olds who used LIVE after bypassing age restrictions.17

Of course, TikTok was never eager to protect its users from immorality. Before the LIVE fiasco, in 2020, TikTok introduced a program featuring “policy shortcuts like ‘delayed enforcement’, ‘deferred policy decisions’, or ‘no permanent ban on Elite + Accounts’ to protect its popular users who violate TikTok’s policies”, no matter that “a majority of elite accounts appear to run afoul of [TikTok’s] policies on sexually explicit content.”18 (For context, approximately 1,400 minors were considered “elite creators”.)

Likewise, TikTok’s (mostly non-human) moderators were never effective at filtering out the worst the app has to offer; the leakage rate—the percentage of harmful content that slips through unnoticed by the mods—is 30% or more. The leakage rates for various types of sexual content, as quoted by the Kentucky brief, are as follows: 35% of “Normalization of Pedophilia”, 33% of “Minor Sexual Solicitation”, 39% of “Minor Physical Abuse”, 30% of “Leading Minors off Platform”, 50% of “Glorification of Minor Sexual Assault”, and 100% of “Fetishizing Minors.”19

Indeed, the thousands of 12- and 13-year-olds who ignored TikTok’s unenforceable age-gating system and opened accounts were also, more than likely, opening themselves to sexual exploitation.

TikTok is not unique

Many may be tempted to read about TikTok’s abuses and pretend that other social media companies don’t play by the same rulebook. Allow me to enlighten you: they do.

TikTok is not unique in its desire to add to its Daily Active Users and hook them to its algorithm, offering millions of impressionable teens a safer version of crack cocaine. Facebook (now Meta), the parent company of Instagram, has been exposed as having done the exact same thing: conducting internal investigations into the addictive nature of Instagram while repeatedly refusing to tell its users what its product is doing to their brains. YouTube only incorporated “YouTube Shorts” after noticing TikTok’s success in lulling its users into a trancelike state via 30-second videos. Every major social media app is modeled after a slot machine, providing rewards at irregular intervals, because no other device has proven to be more addictive. Every feature of social media, from push notifications to infinite scroll to “streaks”, has been carefully designed to exploit a weakness in human psychology. Such addictive technologies will only become more effective as tech giants increase their user base and, by extension, the pool of user data at their disposal.

Neither is TikTok unique as a porn-to-post pipeline. In fact, in an online article cited by state investigators (quoted above), prostitutes are encouraged to lure people to their porn sites using Instagram as one of many intermediaries. Moderators have no way of knowing whether users are telling the truth about their age. As a result, millions of kids are exposed to softcore pornography on Twitter, Instagram, and even Snapchat. Content that is not flagged as pornography can easily slip through the cracks, such as when a video came up on my feed in which women were protesting the war in Ukraine, topless except for yellow and blue body paint (I no longer use Twitter).

Finally, TikTok is far from unique in slow-walking the implementation of safety features. Instagram is just as bad. Instagram developers realize that “likes” and cosmetic filters enforce a status hierarchy that makes teen girls feel like garbage, yet have done little to limit their use. Even after facing congressional scrutiny over their business practices, developers planned to release a version of Instagram tailor-made for children (they have since “paused” development on Instagram Kids). Snapchat knows that cosmetic filters can cause body dysmorphia in teen girls—some of whom have undergone cosmetic surgery to look like the filtered version of themselves—yet the feature remains a staple of the app.20 When these companies do implement safety features, they, like TikTok, make sure that such measures have “no impact on retention.” Of course, this is a fallacy: incorporating safety features that fail to address the root problem—addiction to the app itself—is like giving a bomb victim Tylenol.

Indeed, for all the high-sounding rhetoric about making products safer, Silicon Valley’s addiction technologies are still working fabulously. In 2022, 19% of American teens said they used YouTube “almost constantly”; the number is lower but still high for apps like Snapchat and Instagram, at 15% and 10% respectively. Of the two-thirds of American teens who use social media at all, fully one-third say they are on it “almost constantly.” Facebook is so addictive that 1 in 8 users (360 million people) report that it interferes with “work, parenting, and relationships.” (Facebook now boasts 3 billion monthly active users, more than a third of the world’s population.) Before its ban, TikTok reached “saturation” with American teenagers, meaning it literally could not penetrate further into that share of the market.

Social media companies are not our saviors; they cannot clean up the mess they have created, because none are willing to do what it takes. As one TikTok memo read, “we don’t want to [make changes] to the For You feed because it’s going to decrease engagement.” It is foolish to think that the same people who celebrate addictive behavior as evidence of their success will ever stop the bleeding. Social media companies will continue to play dice with kids’ lives as long as there’s money to be made. The question is, how passive will we be?

Frankly, I have heard from too many people that these apps pose “manageable” risks and that long-term use is fine. Research and the private concerns of tech developers show that assumption to be dead wrong. These apps cannot be used in isolation from the patterns of thinking and being they create. Addiction, indecency, and brain fog are part of the package. The ban against TikTok is an important first step, but cognizance of our own susceptibility to social media’s designs—and a refusal to offload the problem to Generation Alpha—is what will make true reform possible.

Jonathan Haidt and Zach Rausch assembled the quotes in a long essay for their Substack (linked in the introduction), from which I have drawn heavily.

Kentucky brief, p. 7

Kentucky brief, p. 8

Nebraska brief, p. 21

Nevada brief, p. 27

Nebraska brief, p. 33

Nevada brief, p. 28

See Jonathan Haidt’s article, cited in the intro.

According to the New York Times, the average TikTok user spends 96 minutes on the app per day, more than any other social media app.

Kentucky brief, p. 65

Kentucky brief, p. 84

The Anxious Generation, p. 164

The Anxious Generation, pp. 162-163

p. 34

Utah brief, pp. 4, 36

Utah brief, pp. 44-45

Kentucky brief, p. 106

Also known as “Snapchat dysmorphia.” Here’s a quote from the study explaining the disorder: “Body dysmorphic disorder (BDD) is an excessive preoccupation with a perceived flaw in appearance, often characterized by people going to great – and at times unhealthy – lengths to hide their imperfections… teen girls who manipulated their photos were more concerned with their body appearance, and those with dysmorphic body image seek out social media as a means of validation. Additional research has shown 55 percent of plastic surgeons report seeing patients who want to improve their appearance in selfies.”
